Changing faces - projection mapping

2018, Sep 19

I partnered with Mengzhen for this project.

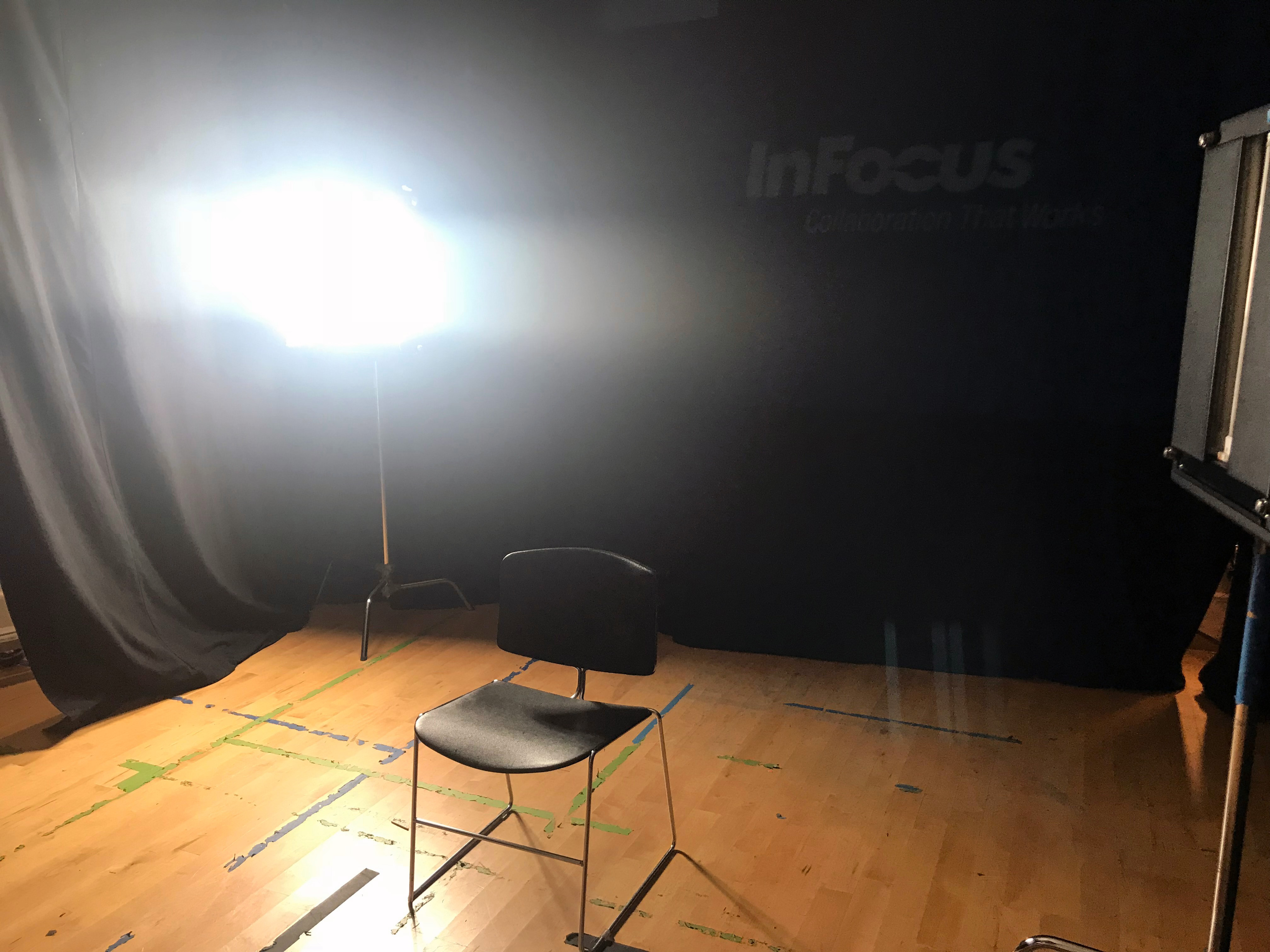

Initially we wanted to project makeup onto users’ faces and let them switch between different makeup looks. We did a quick test run and found that the projection was too bright on the face for the idea to work.

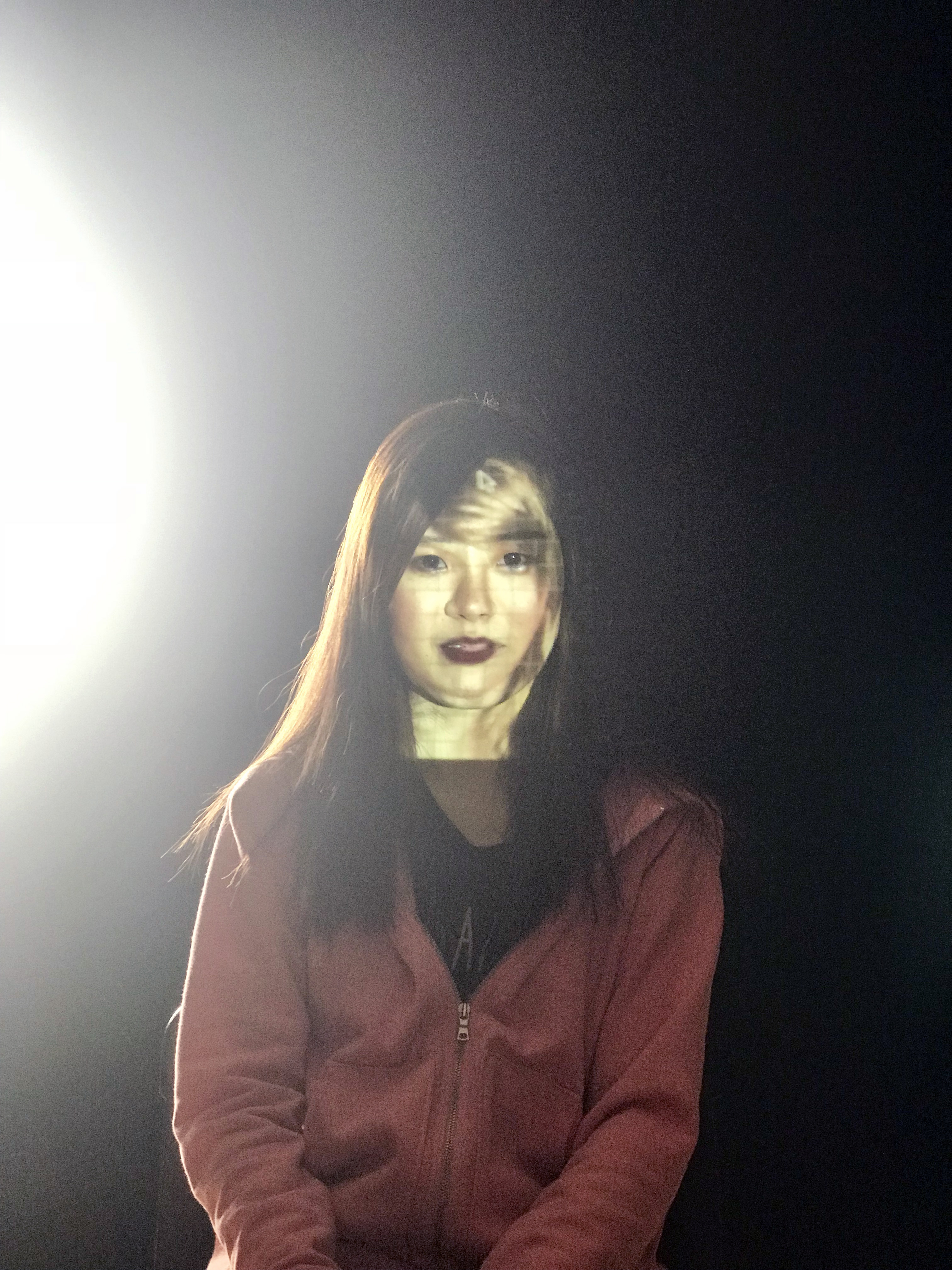

After that test run, we decided to project a face onto our own faces to create a new look. Mengzhen picked Taylor Swift, and we projected her face onto ours. Here are the results:

After testing Taylor’s face, we wanted to try a collection of faces, using our voices as the user input.

The tech stack we used:

- Processing

- Keystone

- WebSockets and webkitSpeechRecognition
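
To give a rough idea of how the voice input could drive the face switching, here is a minimal sketch of the browser side. It is not our actual code: the face names other than Taylor, the `pickFace` and `startListening` helpers, and the WebSocket URL are all placeholders made up for illustration. It assumes the browser recognizes speech with `webkitSpeechRecognition` and sends the index of the matched face over a WebSocket, where a Processing sketch could pick it up and swap the projected image.

```typescript
// Hypothetical face list: only "taylor" comes from the project itself,
// the other entries are placeholders.
const FACES: string[] = ["taylor", "face two", "face three"];

// Return the index of the first face whose name appears in the transcript,
// or -1 if nothing matches.
function pickFace(transcript: string, faces: string[] = FACES): number {
  const heard = transcript.trim().toLowerCase();
  return faces.findIndex((name) => heard.includes(name.toLowerCase()));
}

// Browser-only wiring: listen continuously and forward matches over a socket.
// The URL is a placeholder, not the address we actually used.
function startListening(url: string = "ws://localhost:8025/faces"): void {
  const g = globalThis as any;
  const Recognition = g.webkitSpeechRecognition;
  if (!Recognition || !g.WebSocket) return; // no speech recognition available

  const socket = new g.WebSocket(url);
  const recognition = new Recognition();
  recognition.continuous = true; // keep listening after each phrase

  recognition.onresult = (event: any) => {
    // Take the best alternative of the most recent recognition result.
    const latest = event.results[event.results.length - 1][0].transcript;
    const index = pickFace(latest);
    // readyState 1 means the socket is open.
    if (index >= 0 && socket.readyState === 1) socket.send(String(index));
  };

  recognition.start();
}
```

Calling `startListening()` from a page in a browser that supports `webkitSpeechRecognition` would start the loop; the receiving side only needs to parse the index and switch to the matching image.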

Here are the videos