Sketch To 3D

For our midterm project, my partner, Mengzhen, and I want to explore using machine learning to turn sketches into 3D models.

Tech Stack

Process

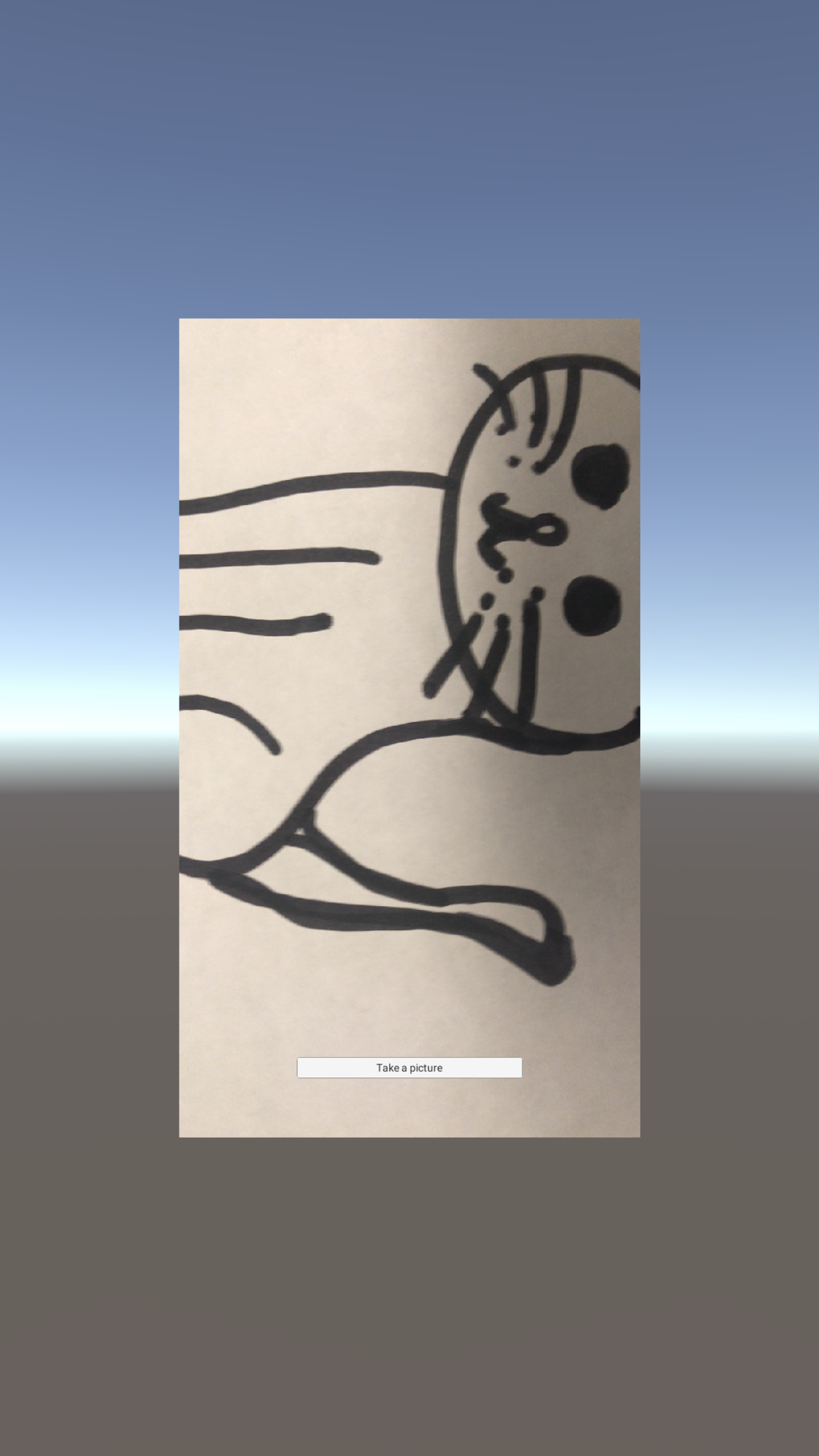

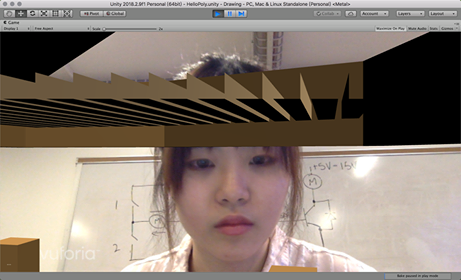

We tried getting access to the camera through both Unity and Vuforia, because we wanted to try different ways of using the devices' cameras. In Unity, you can list all the available cameras and select one to apply as a texture to a game object.

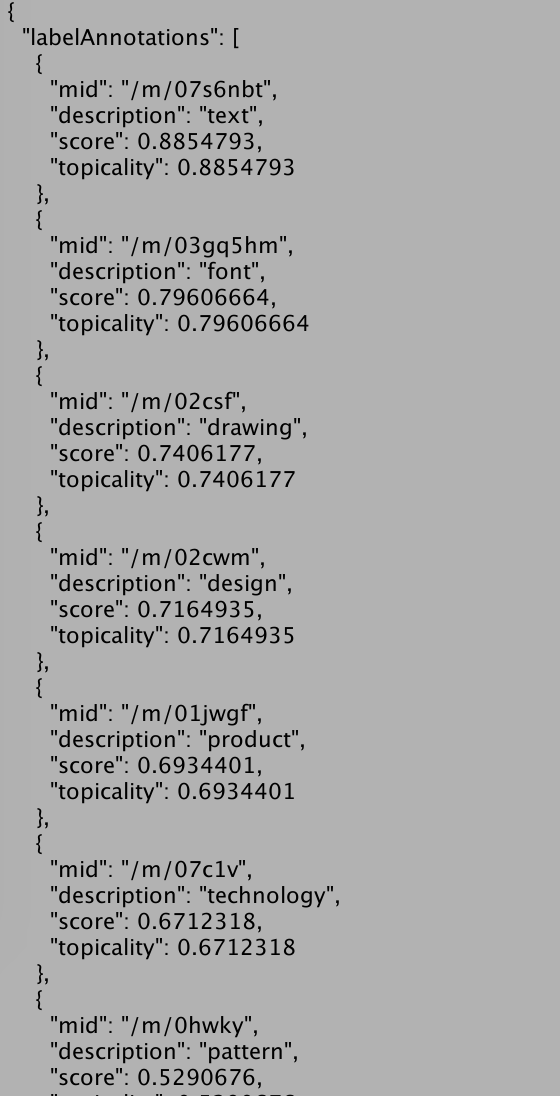

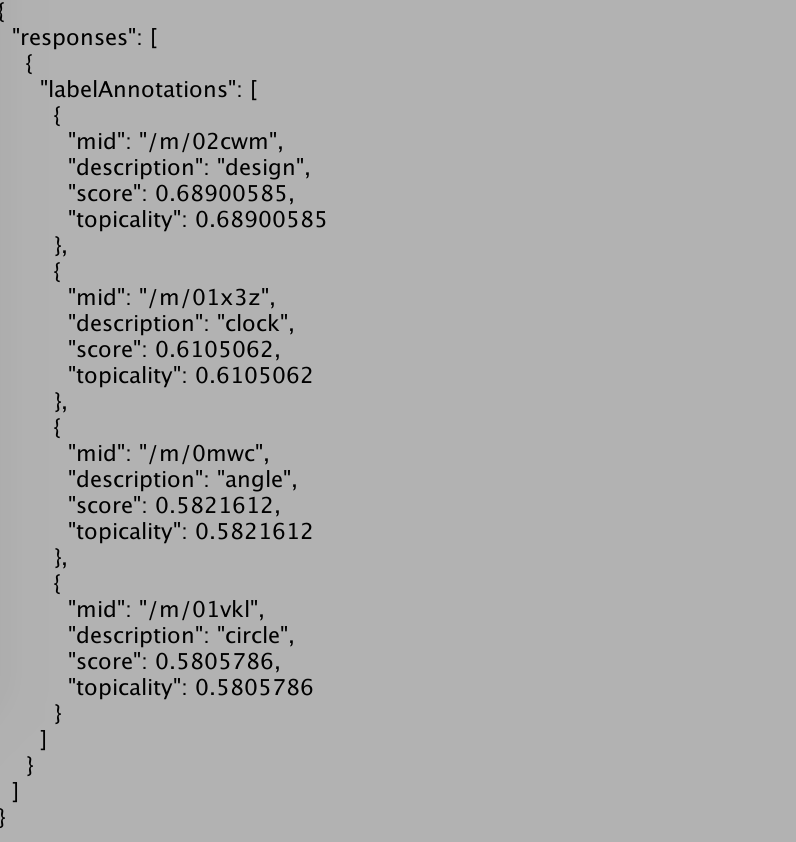

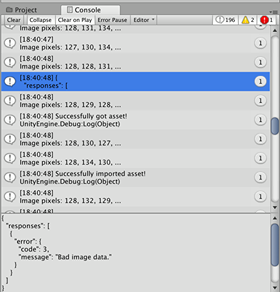

Once we had camera access, we used Google Cloud Vision, because it provides pre-trained models for image recognition. We packed the image into JSON data for the Vision API's image annotate call:

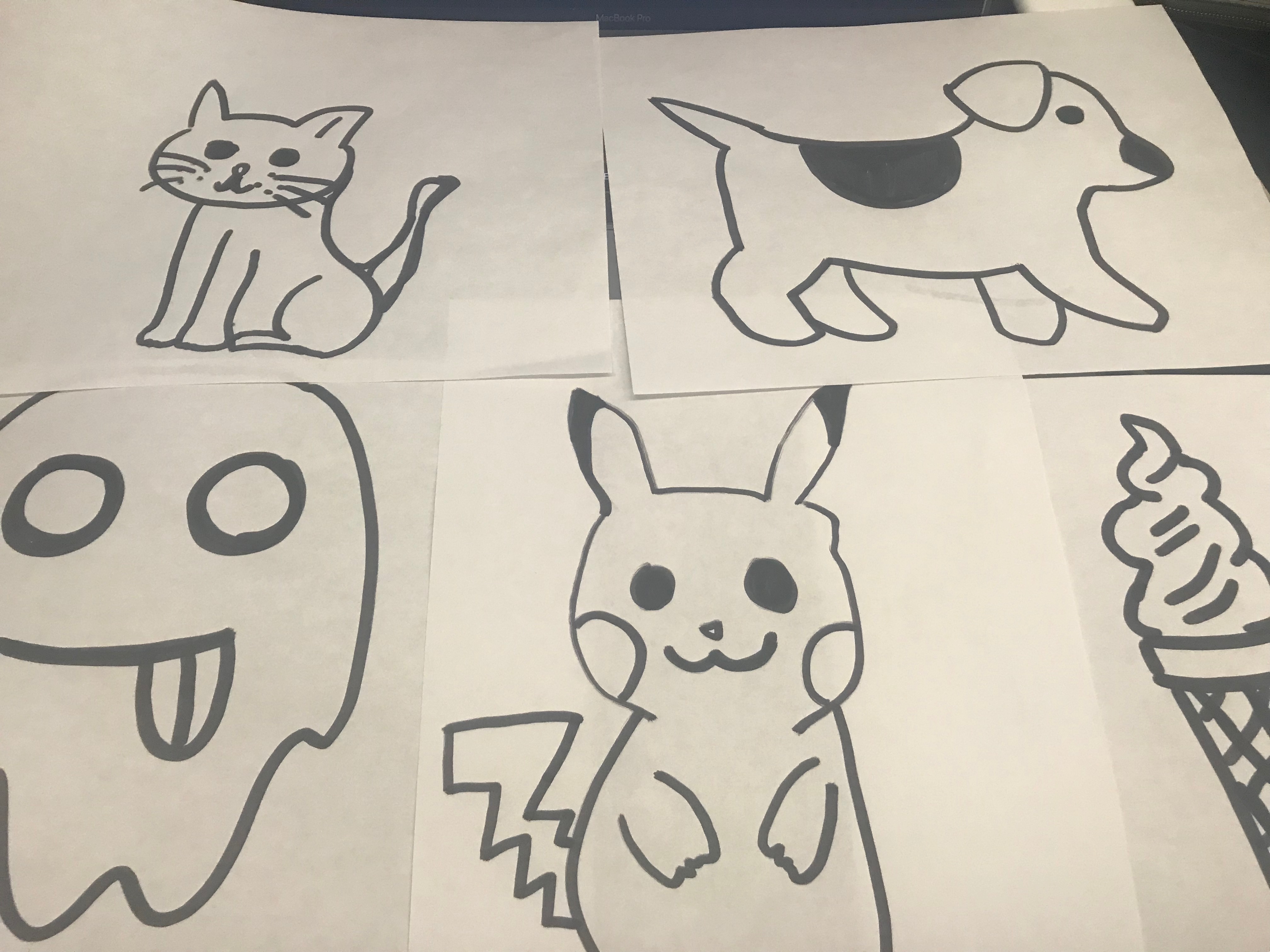

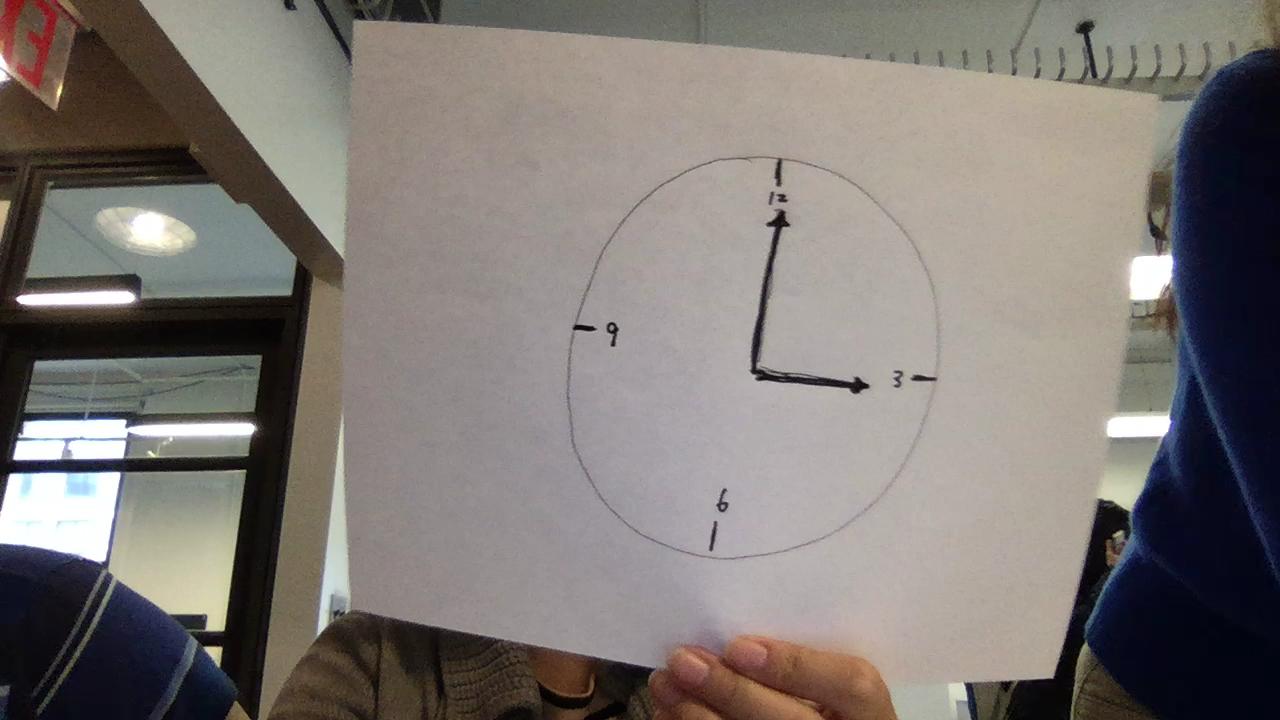
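As a rough illustration of what that JSON looks like, here is a minimal Python sketch of an `images:annotate` request body. Our project built this inside Unity, so this is not our actual code; the helper name `build_annotate_request` is made up for this example, and `LABEL_DETECTION` is an assumption about which feature to ask for.

```python
import base64
import json

# Public REST endpoint for the Vision API's image annotate call.
VISION_ENDPOINT = "https://vision.googleapis.com/v1/images:annotate"


def build_annotate_request(image_bytes, max_results=5):
    """Hypothetical helper: wrap raw image bytes in an annotate request body."""
    # The API expects the image as a base64 string inside the JSON.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "requests": [
            {
                "image": {"content": encoded},
                # Assumption: we ask for labels; other feature types exist.
                "features": [
                    {"type": "LABEL_DETECTION", "maxResults": max_results}
                ],
            }
        ]
    }


# Placeholder bytes stand in for a captured camera frame (PNG or JPG data).
body = build_annotate_request(b"placeholder image bytes")
payload = json.dumps(body)
```

The `payload` string would then be sent as the body of a POST request to `VISION_ENDPOINT`, together with an API key or other credentials.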

We experimented with different drawings and the accuracy of the vision model:

The Vision API gives different results depending on the level of detail in the drawing:

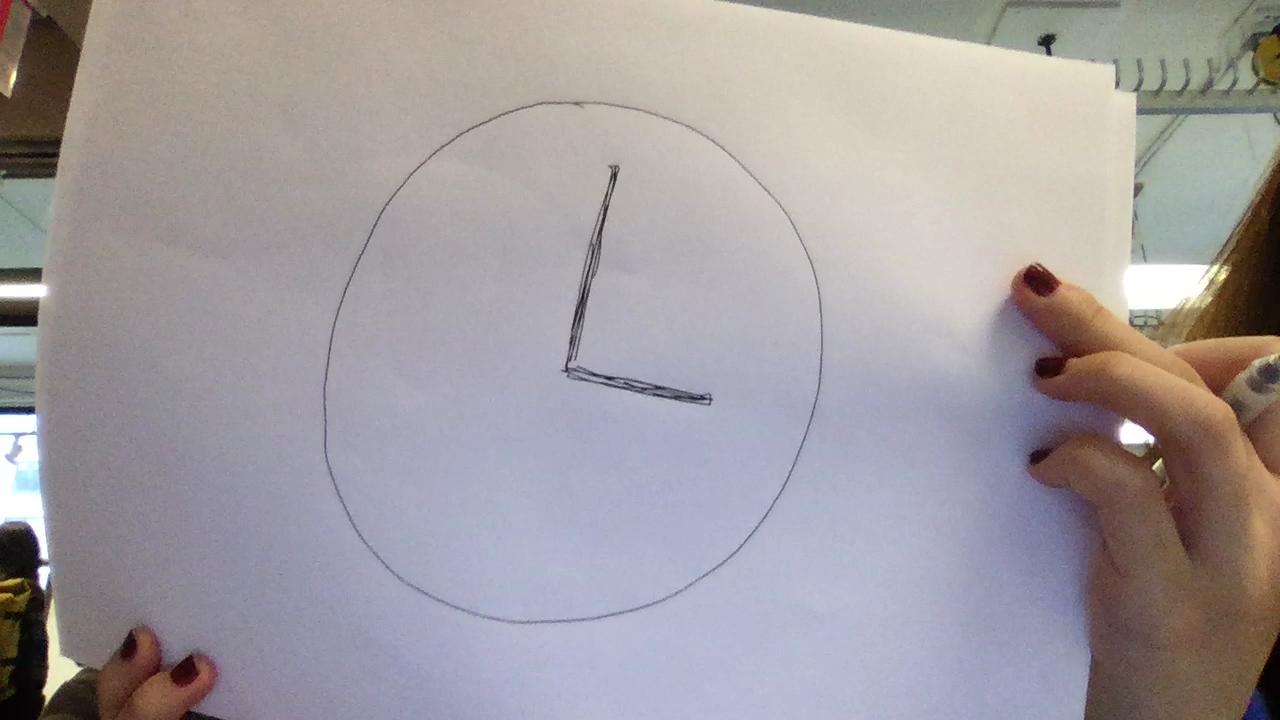
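One way to compare results like these is to pull the label descriptions and scores out of each response and look at them side by side. The sketch below assumes a label detection response, which lists its results under `labelAnnotations`; the helper name `top_labels` and the two sample responses are made up for illustration and are not real output from our drawings.

```python
def top_labels(response, min_score=0.5):
    """Hypothetical helper: return (description, score) pairs above a threshold."""
    responses = response.get("responses") or [{}]
    annotations = responses[0].get("labelAnnotations", [])
    return [
        (a["description"], a["score"])
        for a in annotations
        if a["score"] >= min_score
    ]


# Made-up responses for a rough sketch and a more detailed one.
rough_sketch = {
    "responses": [
        {"labelAnnotations": [
            {"description": "Circle", "score": 0.8},
            {"description": "Clock", "score": 0.4},
        ]}
    ]
}
detailed_sketch = {
    "responses": [
        {"labelAnnotations": [
            {"description": "Clock", "score": 0.9},
            {"description": "Circle", "score": 0.7},
        ]}
    ]
}

rough_labels = top_labels(rough_sketch)
detailed_labels = top_labels(detailed_sketch)
```

With a threshold like this, a label that only barely shows up for a rough sketch is dropped, which makes it easier to see when extra detail changes what the model recognizes.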

Compare two drawings of a clock:

Here is a video of the demo:

We had a hard time porting this project to our cellphones, because the WebCamTexture display and the runtime-loaded 3D model did not show up in the built project. We also tried the Vuforia camera, hoping it would build better.

In the future, we would like to train our own model on the Quick, Draw! data, so that it does a better job of recognizing hand drawings.

For the loaded models, we are thinking of a pet or a tool. We want to give it more personality: it will request items, and the user will need to draw them and give them to it.